Space tech. Robots. Data centers. AI. Water. Environment. Chips.

And what awaits AI's future in the skies: you will find it all in this article.

§1. Rate limits

I got an email recently. If you pay Anthropic $200 a month, you probably got it too. Tucked behind a friendly product update was the actual news:

The replies were what you'd expect. Cancellations. People doing the math on what $200 a month actually buys when you can blow through a Max plan much faster than before, and not just during peak hours, as Anthropic suggested.

I've used Claude Code daily for a long time, and I have 12,500+ commits to my name. Many people like me have felt a drastic change in the last few weeks: you can't stop staring at your usage screen, whereas Claude used to feel far more abundant.

Most coverage framed this as a product decision. Then people assumed it was a bug. It wasn't.

The problem is a lack of data centers: subscribers are sharing the same data center capacity with many more users, so each of them gets a lot less.

Anthropic just didn't scale fast enough to keep up with growing demand, even though the crunch had been foreseeable for a couple of months. That's the elephant in the room they refused to address publicly.

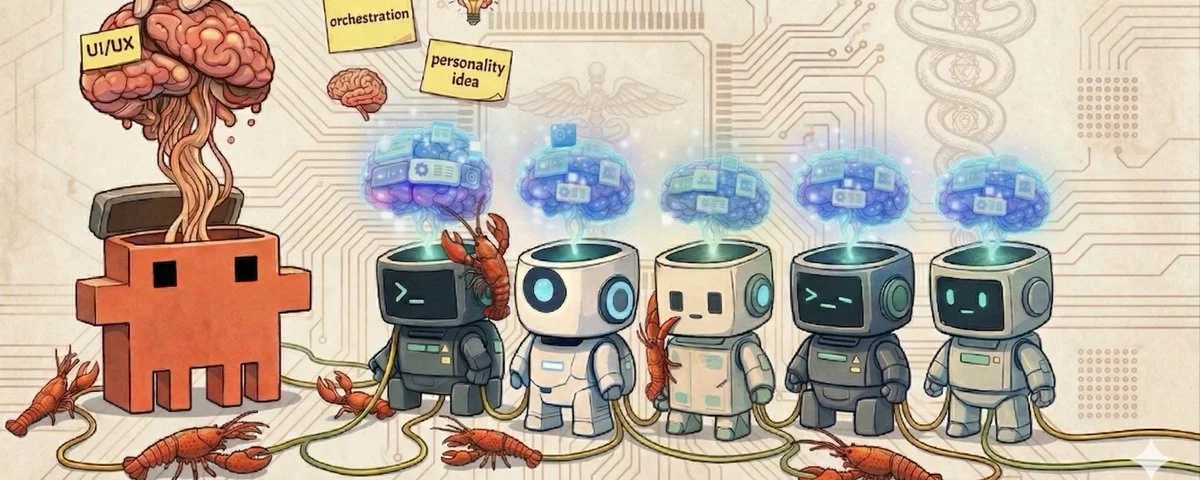

This article explores what happens next, the importance of scaling in AI's future, and how space mining, robots, satellites, and solar technologies will shape it.

§2. The trillion-dollar commitments

Before we talk about what's leaving Earth, let's talk about what just happened at OpenAI.

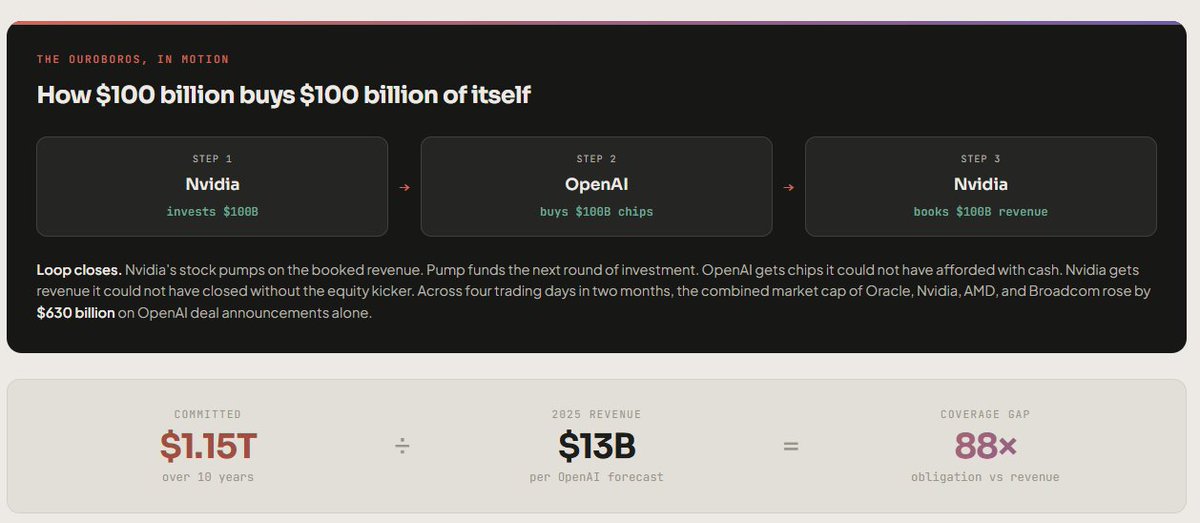

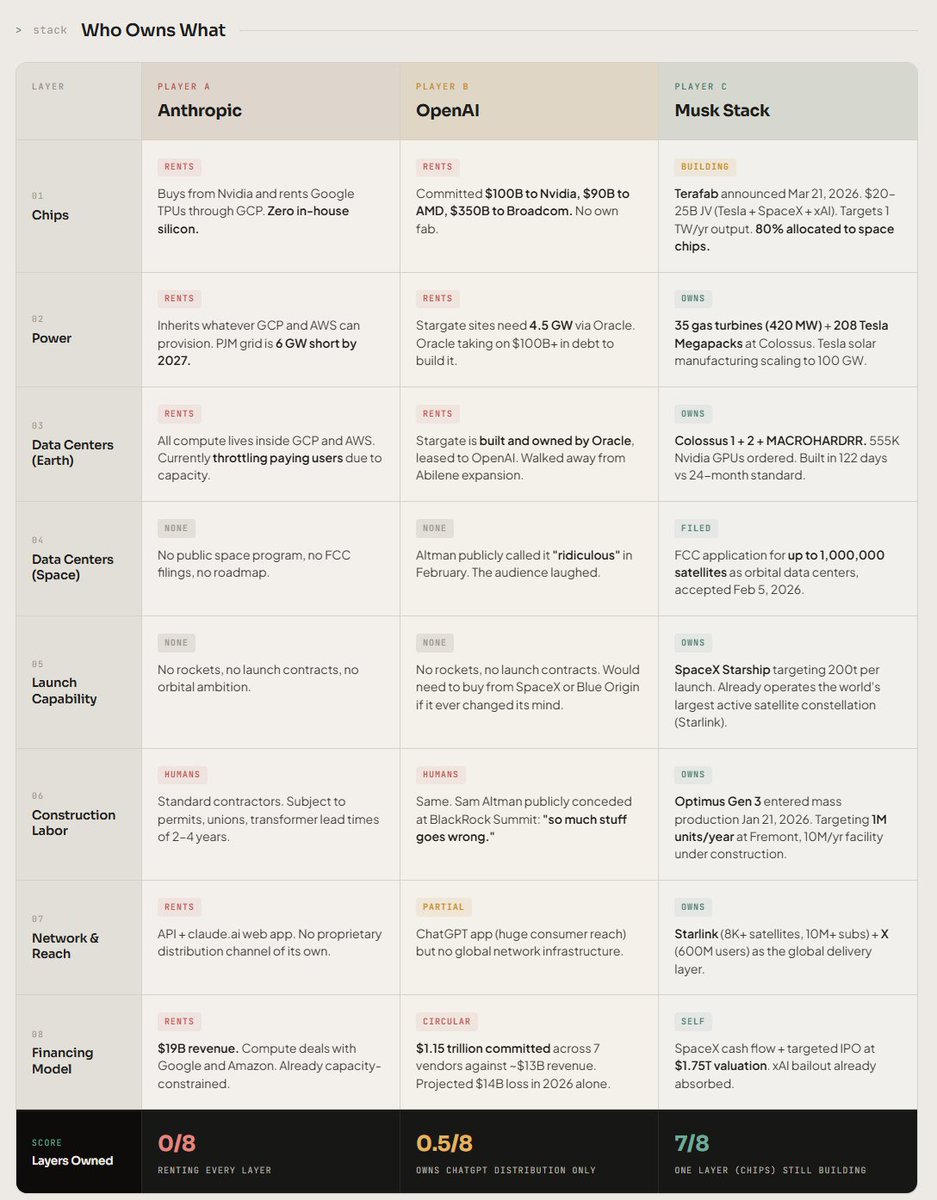

In the last twelve months, OpenAI signed seven deals committing the company to $1.15 trillion in compute and infrastructure spending between now and 2035. Here is the breakdown that surfaced when Tomasz Tunguz pieced it together from public filings:

> Nvidia is investing $100 billion into OpenAI, which OpenAI will then use to buy $100 billion of Nvidia chips.
>
> OpenAI is bankrolled by the seller to buy Nvidia's product.
>
> Nvidia books the revenue.
>
> Nvidia's stock pumps.

The loop closes. However, there are rumors that OpenAI is promising investors a 17.5% annual ROI.

That is a risky bet, yet OpenAI also needs to scale fast rather than play it too safe like Anthropic. The two almost feel like opposite ends of the risk spectrum, and both might be in danger.

- Anthropic: losing customers and sentiment because it doesn't scale fast enough, and risking not having enough compute to keep training models at a fast pace.

- OpenAI: needing to grow profitability at some point, while (1) competition is always a risk factor, (2) wildcards like highly optimized local AI could emerge, and (3) Elon Musk's space expansion could become the unexpected dark horse that wins the AI race. Any of these can affect OpenAI's long-term profitability.

Here's where the math actually breaks. Even with a hyper-optimistic margin curve, Tomasz Tunguz estimates that OpenAI would need to grow revenue from ~$13B in 2025 to $577B by 2029 to support the spending implied by these contracts.

That's roughly the size of Google's entire current revenue.

By 2029.
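To see how steep that curve is, here's a minimal Python sketch of the implied compound annual growth rate. It takes the ~$13B and $577B figures above at face value and assumes four compounding years (2025 to 2029); it's a back-of-the-envelope check, not Tunguz's actual model.

```python
# Back-of-the-envelope: what annual growth rate turns ~$13B (2025) into $577B (2029)?
# Both figures are the estimates quoted in the text, taken at face value.

def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end / start) ** (1 / years) - 1

revenue_2025 = 13e9    # ~$13B revenue in 2025 (estimate)
revenue_2029 = 577e9   # $577B required by 2029 (Tunguz's estimate)
years = 2029 - 2025    # assumed: four compounding years

rate = cagr(revenue_2025, revenue_2029, years)
print(f"Required growth: {rate:.0%} per year, {revenue_2029 / revenue_2025:.1f}x overall")
# prints: Required growth: 158% per year, 44.4x overall
```

Under those assumptions, that's roughly 2.6x revenue growth every single year, four years running.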

Two new things happened within the last week that confirm the pressure from not expanding data centers fast enough is real:

1) OpenAI just closed a $122 billion funding round at an $852 billion valuation, anchored by Amazon, Nvidia, and SoftBank, with continued participation from Microsoft. It's the largest private funding round in tech history.

2) OpenAI is now quietly walking back its compute spending projections from the $1.4 trillion Altman defended in front of investors to roughly $600 billion through 2030. That's a 57% cut. It's OpenAI itself admitting that the obligations the company signed in 2025 are no longer sustainable.
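The 57% figure checks out. A quick Python sketch, taking both reported projections at face value (they may not cover exactly the same time window):

```python
def percent_cut(before: float, after: float) -> float:
    """Fractional reduction when a figure drops from `before` to `after`."""
    return (before - after) / before

old_projection = 1.4e12   # $1.4T Altman defended in front of investors
new_projection = 0.6e12   # ~$600B through 2030, as reported

cut = percent_cut(old_projection, new_projection)
print(f"Cut: {cut:.0%} (${(old_projection - new_projection) / 1e9:,.0f}B less)")
# prints: Cut: 57% ($800B less)
```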

The entire frontier AI competition will be about data centers, and the math behind it comes with an inherent question:

Who can scale data centers at minimal cost? More data centers mean more training, better models, more compute for users, and, if you can become far more resourceful than all the competition out there, winning the AI race.

That winner will likely be the one with the tech to deliver that resourceful scaling.

Someone who seems to have already created all the necessary tech.

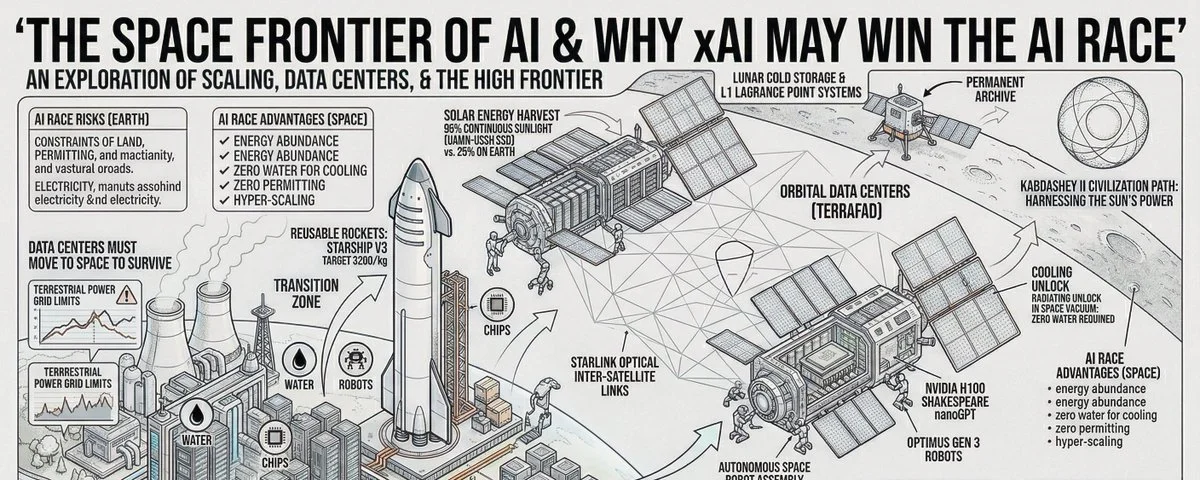

- Robots: for building

- Solar panels: for energy

- Reusable rockets: for space transportation

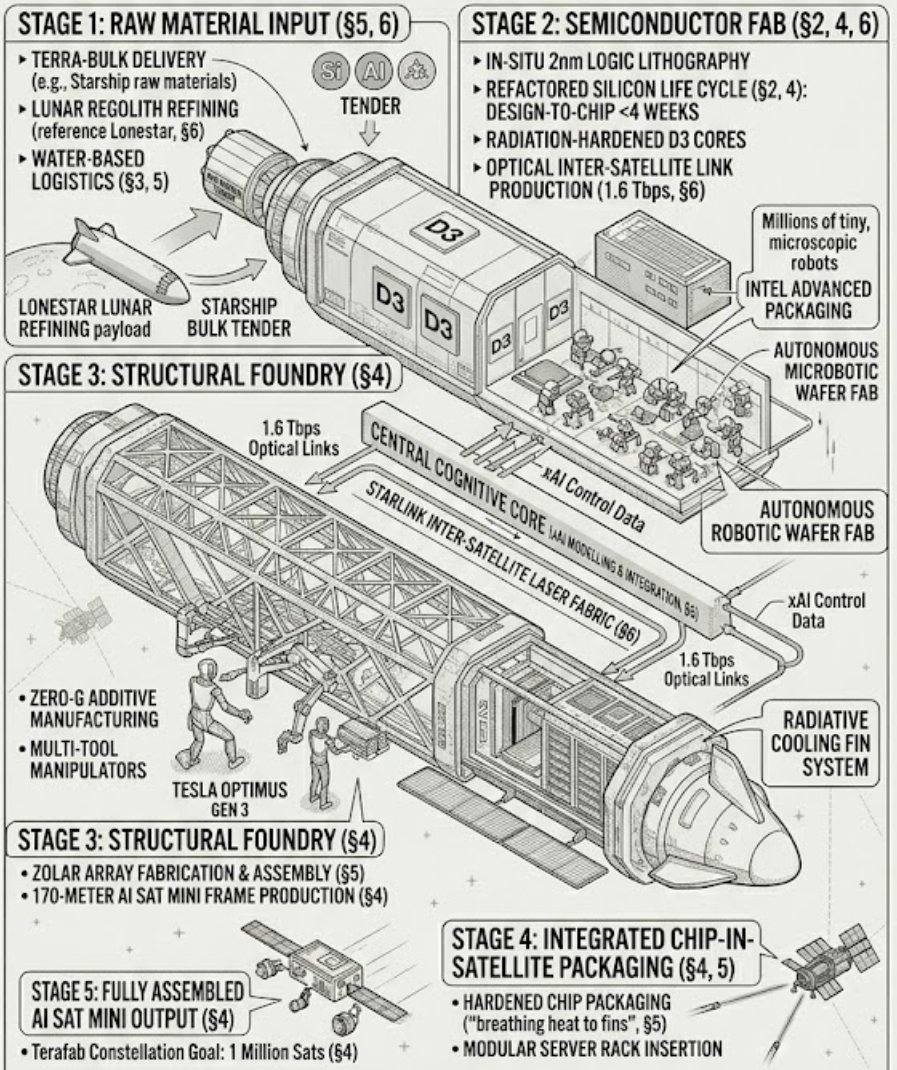

...and many more key inventions that glue all of this together, with one more key piece of infrastructure in the works now: Terafab.

§3. Earth is out of room

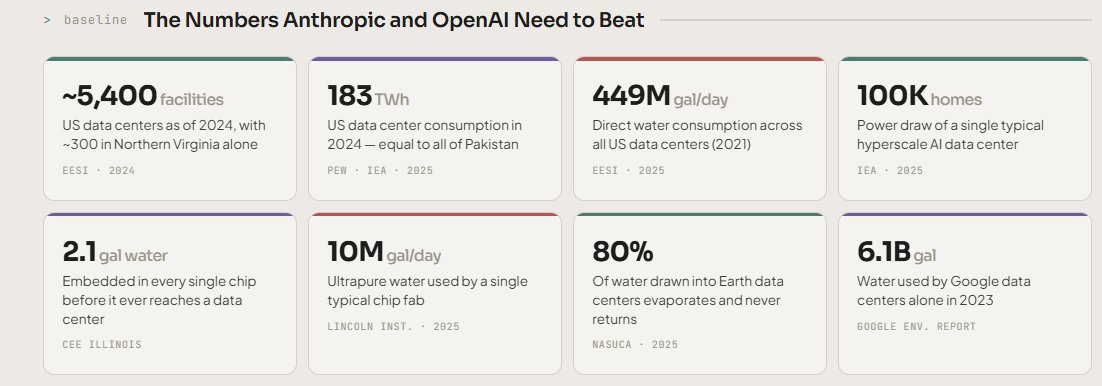

You can have all the money in the world. You still can't conjure up gigawatts of electricity in less than a decade.

This is the part that doesn't show up in the Altman keynote.

You can't permit a new gas plant in three years. You can't build a transmission line in five. Transformer lead times are now two to four years. The power doesn't exist, it can't be willed into existence on AI's timeline, and the pushback is bipartisan:

- Bernie Sanders and Ron DeSantis are both opposing data center buildouts

- Palm Beach County, Trump's home county, killed a 200-acre data center after local opposition

- Aragon, Spain has protests in the streets

- Dublin, Ireland put a moratorium on new connections, because data centers already consume 79% of the city's electricity

- Northern Virginia is at 26% of the state grid and still climbing

This is the world Anthropic and OpenAI are betting will keep getting bigger.

It won't. And if it does, it will eat the world alive because of the environmental havoc it will cause.

§4. The five-day decision

What happened in February 2026 is important:

February 2. SpaceX announces it has acquired xAI in an all-stock transaction. The combined entity is valued at approximately $1.25 trillion. Reuters described it as the largest M&A transaction ever.

The deal structure was a share swap: xAI investors received 0.1433 SpaceX shares per xAI share, with some executives offered cash-out at $75.46/share. No new outside capital.
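To make the swap terms concrete, here's a small Python sketch for a hypothetical holder of 1,000 xAI shares. The implied SpaceX share price assumes the executive cash-out price and the exchange ratio value xAI stock identically; that equivalence is my assumption, not a disclosed term.

```python
EXCHANGE_RATIO = 0.1433   # SpaceX shares received per xAI share (per the text)
CASH_OUT_PRICE = 75.46    # USD per xAI share offered to some executives (per the text)

def spacex_shares_for(xai_shares: float) -> float:
    """SpaceX shares an xAI holder receives under the stated exchange ratio."""
    return xai_shares * EXCHANGE_RATIO

# Assumption: cash-out and swap price xAI stock the same, so one SpaceX share
# is worth the cash price divided by the exchange ratio.
implied_spacex_price = CASH_OUT_PRICE / EXCHANGE_RATIO

holding = 1_000  # hypothetical xAI position
print(f"{holding:,} xAI shares -> {spacex_shares_for(holding):.1f} SpaceX shares")
print(f"Implied SpaceX price: ${implied_spacex_price:,.2f}")
```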

Key note: SpaceX and xAI now serve the same mission.

February 5. Three days later. The FCC's Space Bureau formally accepts a SpaceX filing for review.

The filing, submitted on January 30, 2026, requests authority to deploy up to one million satellites functioning as orbital data centers in low Earth orbit at altitudes between 500 and 2,000 kilometers.

The phrase "up to one million" is in quotation marks because that is the literal number SpaceX submitted to the United States government.

Amazon doesn't seem to like the news, though, as this plan could crown a new winner in the data center race, which could hurt the future of Amazon and AWS.

SpaceX already has about 10,000 Starlink satellites in orbit. A million would be 100x more, and I reckon most of the building will be done by robots, via Tesla Optimus, to scale the operations.

For context: there are currently about 15,000 active satellites in Earth orbit total, including everything ever launched by every nation in history. Starlink itself is reaching 10,000.
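For scale, a quick Python check of the ratios, using the approximate counts above:

```python
STARLINK_TODAY = 10_000      # approx. Starlink satellites in orbit (per the text)
ACTIVE_WORLDWIDE = 15_000    # approx. active satellites of all operators (per the text)
REQUESTED = 1_000_000        # "up to one million" in SpaceX's FCC filing

starlink_multiple = REQUESTED / STARLINK_TODAY
world_multiple = REQUESTED / ACTIVE_WORLDWIDE

print(f"vs. Starlink today: {starlink_multiple:.0f}x")
print(f"vs. every active satellite today: {world_multiple:.0f}x")
```

In other words, the filing asks for roughly 67 times everything currently operating in orbit.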

The filing contains the math. It contains the physics. And it contains, in the actual text of the document SpaceX submitted to a federal regulator, this sentence:

"Freed from the constraints of terrestrial deployment, within a few years the lowest cost to generate AI compute will be in space. This cost-efficiency alone will enable innovative companies to forge ahead in training their AI models and processing data at unprecedented speeds and scales."

It also contains this phrase, which I read three times to make sure I was quoting it correctly:

"Launching a constellation of a million satellites that operate as orbital data centers is a first step towards becoming a Kardashev II-level civilization, one that can harness the Sun's full power."

§5. The physics is the entire argument

Here is why this isn't science fiction.

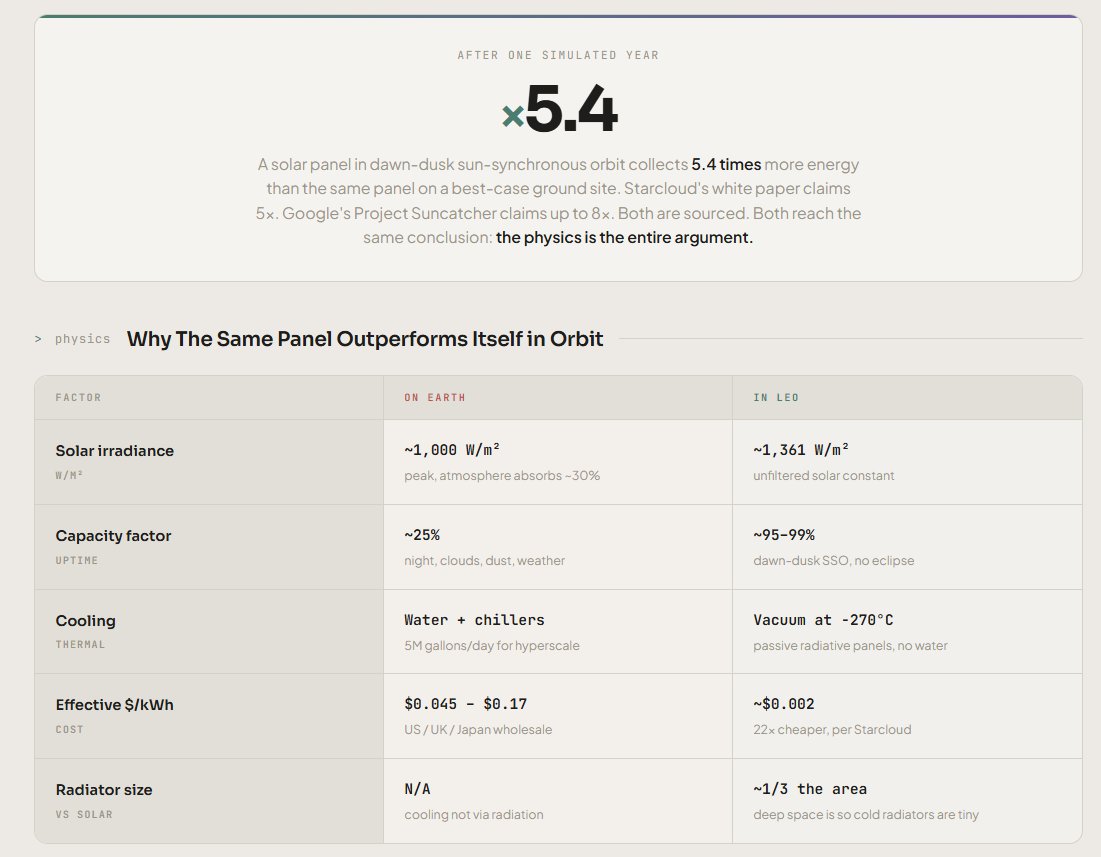

Solar panels in low Earth orbit, in the kind of dawn-dusk sun-synchronous orbits SpaceX, Google, Bezos, and Starcloud have all chosen, generate up to eight times more energy per square meter than the same panel on the ground at mid-latitudes.

The reason is brutally simple:

- No clouds. No weather attenuation, ever.

- No atmosphere. No air mass losses on the way down.

- No nighttime. In dawn-dusk SSO, the satellite rides the day-night boundary and stays in near-continuous sunlight, around the clock, for years.

- Capacity factor approaches 99%. On Earth, even the best desert solar farm runs at a 20 to 30% capacity factor over a year.

When you give a satellite 5 to 8x more usable solar energy and remove the diurnal cycle, the grid problem disappears entirely.
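Where does a 5 to 8x range come from? A rough Python sketch, under loudly simplified assumptions: 1,361 W/m² of sunlight above the atmosphere (the solar constant), a 1,000 W/m² "peak sun" rating on the ground, a 99% capacity factor in orbit, and hypothetical ground capacity factors of 17% to 25%. It ignores panel efficiency, temperature, and degradation, so treat it as an order-of-magnitude check.

```python
SOLAR_CONSTANT = 1361.0   # W/m^2, mean solar irradiance above the atmosphere
GROUND_PEAK = 1000.0      # W/m^2, standard "peak sun" at the surface (assumed)
HOURS_PER_YEAR = 8760

def annual_kwh_per_m2(irradiance_w: float, capacity_factor: float) -> float:
    """Solar energy available per square meter per year, in kWh.
    capacity_factor folds in night, weather, and sun angle."""
    return irradiance_w * capacity_factor * HOURS_PER_YEAR / 1000

orbit = annual_kwh_per_m2(SOLAR_CONSTANT, 0.99)  # dawn-dusk SSO, ~99% (per the text)

for ground_cf in (0.17, 0.20, 0.25):  # hypothetical ground capacity factors
    ground = annual_kwh_per_m2(GROUND_PEAK, ground_cf)
    print(f"ground CF {ground_cf:.0%}: orbit yields {orbit / ground:.1f}x more")
```

The ratio is dominated by the capacity factor: the atmosphere costs some intensity, but night and weather cost far more.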

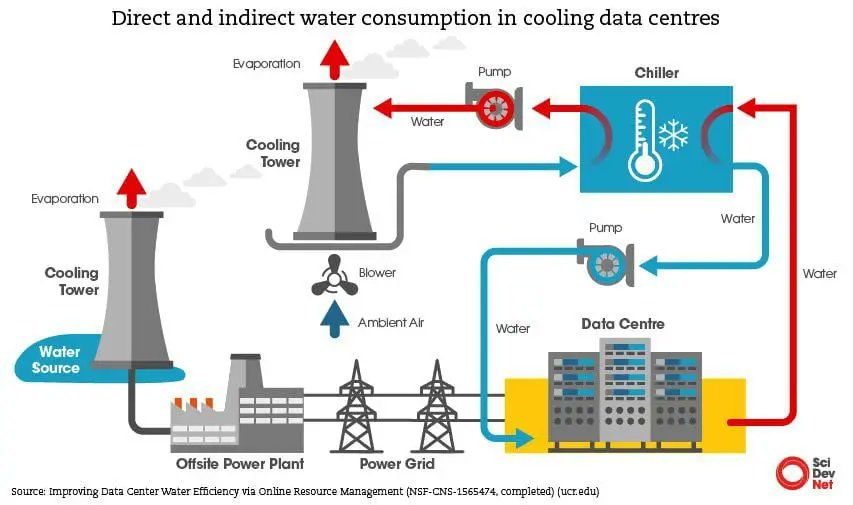

The cooling problem is the second one. Every gigawatt of AI compute on Earth turns into roughly a gigawatt of waste heat that has to be removed, and that cooling often relies on water, which is why Northern Virginia's data centers consume billions of gallons annually.

In space, this dynamic doesn't exist: there's no water to evaporate, and waste heat is radiated away into the cold of deep space instead. So, environmentally, moving AI data centers to space is one of the biggest unlocks for Earth's water problem, which will only worsen if AI operations don't move off-planet.
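One nuance worth making concrete: in a vacuum there's no air or water to carry heat away, so an orbital data center has to shed its waste heat by thermal radiation from radiator panels. A minimal Python sketch using the Stefan-Boltzmann law estimates radiator area per megawatt, assuming an idealized two-sided radiator with emissivity 0.9 that sees only deep space (no absorbed sunlight or Earthshine):

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)

def radiator_area_m2(heat_w: float, temp_k: float,
                     emissivity: float = 0.9, sides: int = 2) -> float:
    """Radiator area needed to reject `heat_w` watts purely by thermal radiation.
    Idealized: 0 K background, no absorbed sunlight or Earthshine."""
    flux_per_side = emissivity * SIGMA * temp_k ** 4  # W per m^2 per face
    return heat_w / (flux_per_side * sides)

for temp in (300, 350):  # radiator surface temperatures in kelvin (assumed)
    area = radiator_area_m2(1e6, temp)
    print(f"1 MW at {temp} K: ~{area:,.0f} m^2 of two-sided radiator")
```

The T^4 term is why running radiators hotter shrinks them quickly, and why, in orbit, the cooling bill is paid in radiator area and mass rather than water.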

And the third one is land. There is no permitting. There is no Aragon protest. There is no county commissioner's hearing.

When Sam Altman called the entire concept "ridiculous" in late February 2026, his argument was the cost of launch:

"If you just do the very rough math of launch costs relative to the cost of power we can produce on Earth, not to mention how you are going to fix a broken GPU in space, we are not there yet."

He's not wrong about today's launch costs, if you assume that robots, renewable energy, reusable rockets, and space mining don't exist.

But they do, and they're all owned by one person: Elon Musk. Interestingly, he's the person from whom Altman yoinked OpenAI, turning it into a for-profit company after getting its initial investment, as a non-profit, directly from Musk.

Falcon 9 is already at roughly $3,600/kg to orbit. Starship V3, which is scheduled for its first integrated test flight in roughly four weeks from when I'm publishing this article, is targeting $200/kg.

When the cost of getting one kilogram of chip to orbit drops from $3,600 to $200, every cost equation in this article inverts.
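Here's what that per-kilogram drop means for a single payload, as a minimal Python sketch. It uses the two $/kg figures above and a hypothetical 60 kg satellite, and counts launch cost only (no hardware, insurance, or operations):

```python
FALCON_9_PER_KG = 3_600     # USD/kg to orbit, approx. today (per the text)
STARSHIP_V3_TARGET = 200    # USD/kg, Starship V3 target (per the text)

def launch_cost(mass_kg: float, usd_per_kg: float) -> float:
    """Launch cost only, for a payload of the given mass."""
    return mass_kg * usd_per_kg

mass = 60  # kg, hypothetical small satellite
for name, rate in (("Falcon 9", FALCON_9_PER_KG),
                   ("Starship V3 target", STARSHIP_V3_TARGET)):
    print(f"{name}: ${launch_cost(mass, rate):,.0f} to launch {mass} kg")

print(f"Reduction: {FALCON_9_PER_KG / STARSHIP_V3_TARGET:.0f}x cheaper per kg")
```

If the target holds, launch stops being the dominant line item for each kilogram of compute, which is the lever the space data center argument rests on.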

Sources: Google Research blog and preprint (Nov 4, 2025), AI Certs News (Nov 25, 2025), Introl Orbital Race report (Feb 21, 2026), Fox Business on Altman quote (Feb 23, 2026), TechCrunch on launch economics, The Next Web on space data center physics.

§6. It's already happening

This is the part I have to repeat slowly because it sounds like I'm making it up.

On March 6, 2025, a hardware data center landed on the Moon.

Lonestar Data Holdings put a Microchip RISC-V processor and a Phison 8TB Pascari enterprise SSD inside a 3D-printed casing designed by Bjarke Ingels.

They strapped it to Intuitive Machines' Athena lander on a Falcon 9.

The lander reached the lunar surface and toppled. The Phison hardware survived the fall intact.

The mission ended within roughly 24 hours because the toppled lander couldn't generate enough solar power.

For the time it operated, it was the first physical data center to ever sit on the lunar surface.

Lonestar's next mission is a multi-petabyte system at the Earth-Moon L1 Lagrange Point, scheduled for 2027. The capacity is already sold out.

On November 2, 2025, the first Nvidia H100 GPU reached low Earth orbit.

Starcloud-1, a 60-kilogram satellite about the size of a small refrigerator, launched on a SpaceX Bandwagon-4 mission carrying a single H100 chip.

It is, per Nvidia's own statement, 100 times more powerful than any GPU compute that had ever been placed in space.

In December 2025, that satellite became the first system to train a large language model in orbit.

The model was nanoGPT, written by Andrej Karpathy (@karpathy).

The training data was the complete works of Shakespeare. The result was an LLM that responds in Shakespearean English.

The first message Starcloud-1 transmitted back to Earth was generated by Gemma, running on an H100, in space:

"Greetings, Earthlings! Or, as I prefer to think of you, a fascinating collection of blue and green."

So, put simply, here's what has happened on AI's space frontier in seventeen months:

- A Microchip RISC-V data center has touched the Moon

- An Nvidia H100 has trained a Shakespeare model in orbit

- Google has published a research preprint, radiation-tested its own chip, and committed publicly to a 2027 launch

- SpaceX has filed for one million satellites

- Starcloud has filed for 88,000

- Blue Origin has filed for 51,600

- Marc Benioff (Salesforce) has called space "the lowest cost place for data centers"

- China has put the Aurora 1000 space computer through 1,000+ days on a Jilin-1 satellite, and is building a 2,800-satellite Star Computing constellation

Investing in Earth-based data centers is extremely risky, as the environment won't be able to keep up with demand, and the water resources won't be enough.

Not scaling enough is also a problem, as the havoc caused by Anthropic's rate limit reductions has shown.

The only scalable solution is somewhere in the skies.

And whoever has been building for that has been playing 4D chess.

If you look at everything Elon Musk has been building, from solar panels to robots, to reusable rockets, to chip factories, to Starlink, they all connect...