LangChain has raised $160 million. Three years of development. A billion-dollar valuation. LangSmith, their testing platform, is genuinely sophisticated: trajectory evals, trace-to-dataset pipelines, LLM-as-judge, regression suites, unit test frameworks for tools. They have the pieces. Credit where it's due.

But pieces aren't a practice.

LangChain gives you testing tools. It never tells you what to test, in what order, or when you're done.

There's no opinionated workflow that says, in order:

- this failure happened

- now write a skill

- now write the deterministic code

- now write unit tests

- now write LLM evals

- now add a resolver trigger

- now eval the resolver

- now audit for duplicates

- now smoke test

- now file correctly

That loop doesn't exist.You have to invent it yourself from scattered primitives. A great many users of AI still don't test their agents at all, because the framework they chose probably gave them a gym membership without a workout plan.

Most AI agent "reliability" is vibes-based. Prompt tweaks. Bigger system messages. "Please don't hallucinate" incantations. That stuff decays the moment the conversation gets complex. The frameworks that raised hundreds of millions of dollars to solve this gave you monitoring dashboards and unit test helpers and said "good luck."

My agent screwed up twice this week. Neither failure can happen again. Not because I asked nicely. Because I turned each failure into a permanent structural fix: a skill with tests that run every day, forever.

I call the practice "skillify."Once you use it, your agents won't keep making the same mistakes. Here's how it works.

Failure 1: The Trip That Was Already in the Database

I asked my OpenClaw about an old business trip, nearly ten years back, buried somewhere in calendar history. Simple question. Should take one second.

Instead the agent did this:

- Called the live calendar API → blocked (too far back).

- Tried email search → noisy results, nothing conclusive.

- Tried the calendar API again with different params → still blocked.

- Five minutes later, searched my local knowledge base and found it instantly.

The answer had been sitting in my own data the whole time. 3,146 calendar files spanning 2013 through 2026. Already indexed. Already local. One grep away.

The agent just didn't look there first.

In the framework I've been writing about (thin harness, fat skills) there's a key distinction between work that requires judgment and work that requires precision. I call them latent and deterministic. Calendar grep is deterministic. Same input, same output, every time. No model needed. But the agent did it in latent space anyway, spinning up reasoning, making API calls, interpreting results, when a three-line script would have returned the answer instantly.

That's the bug. Not a wrong answer. A wrong side.

The fix: calendar-recall (Steps 1 + 2)

In thin harness / fat skills, a skill is a markdown procedure that teaches the model how to approach a task. Not what to do (the user supplies the what). The skill supplies the process. Think of it like a method call: same procedure, radically different outputs depending on what you pass in.

Here's the skill that came out of this failure:

name: calendar-recall description: "Brain-first historical calendar lookup. ALWAYS use this before any live API for any event not in the future or the last 48 hours."

And the hard rule inside:

Live calendar APIs are ONLY for events in the FUTURE or the LAST 48 HOURS. Everything historical goes through the local knowledge base first.

Here's the thing that makes this work: the agent itself wrote the deterministic script. The skill file (markdown, living in latent space) told the agent how to fix the problem. The agent read the skill, understood that calendar search is deterministic work, and generated a script to handle it:

$ node scripts/calendar-recall.mjs search "Singapore" Found 2 matching day(s): ── 2016-05-07 ── Flight to Singapore, Mandarin Oriental check-in ── 2016-05-08 ── Lunch with investors at Fullerton Hotel

Code that runs in under 100 milliseconds (most of which is Bun startup; the actual grep is sub-millisecond). Zero LLM calls. Zero network. Just local files.

This is the loop that makes the whole architecture work: the latent space builds the deterministic tool, then the deterministic tool constrains the latent space. The agent used judgment (latent) to write calendar-recall.mjs. Now the skill forces the agent to run that script instead of reasoning about calendar data. The model's intelligence created the constraint that prevents the model from being stupid.

The old failure path becomes structurally unreachable. The skill says "search local first." The script does the search. The agent never gets a chance to be clever about it or get it wrong again.

Failure 2: "28 Minutes" (Steps 1 + 2 again)

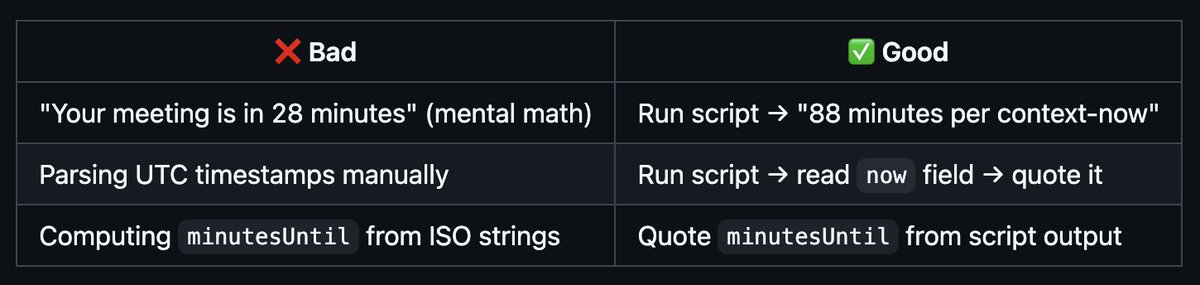

Same day. Agent says: "Your next meeting is in 28 minutes."

Reality: 88 minutes away. The agent had done UTC→PT timezone math in its head and was off by exactly an hour.

The thing is, a script already existed (context-now.mjs) that outputs this:

{ "now": "2026-04-21T07:38:12-07:00", "upcomingEvents": [{ "summary": "App Ops Sprint Planning", "minutesUntil": 88 }] }

Code that runs in about 50 milliseconds. Zero ambiguity. The agent just didn't run it.

Same shape as before: deterministic work (subtracting timestamps) done in latent space. The model was doing mental math when a script had the answer.

The fix: context-now, the skill:

name: context-now description: "ALWAYS-ON discipline: run context-now.mjs before making ANY time-sensitive claim. Never do UTC→PT conversion in your head."

Here's the simple before/after with and without these simple skills:

Skillify: The pattern that will save your sanity

Two failures. Same shape. The agent had the right tool and chose cleverness instead of discipline.The wrong thing happened in the wrong machine space.

In a normal AI setup, the AI will apologize, promise to do better, and two weeks later the same thing happens with a different query or a different timezone. The agent has no memory of the bug, no test for the bug, nothing stops it from recurring.

Skillify is the fix. Every failure becomes a skill. Every skill has tests. The bug becomes structurally impossible to repeat.

Here's the 10-item checklist I use when a failure gets promoted:

□ 1. SKILL.md — the contract (name, triggers, rules) □ 2. Deterministic code — scripts/*.mjs (no LLM for what code can do) □ 3. Unit tests — vitest □ 4. Integration tests — live endpoints □ 5. LLM evals — quality + correctness □ 6. Resolver trigger — entry in AGENTS.md □ 7. Resolver eval — verify the trigger actually routes □ 8. Check resolvable + DRY audit □ 9. E2E smoke test □ 10. Brain filing rules

A feature that doesn't pass all ten is not a skill. It's just code that happens to work today.

The two failures above already walked through steps 1 and 2: write the SKILL.md (the contract), then write the deterministic code (the script the agent builds and then uses). But before I walk through the remaining eight steps, I want to show you what skillify looks like in daily use, because it's not just a response to failure. It became a verb.

Skillify as a verb

For me, building my OpenClaw (and GBrain), the checklist started as a failure-response protocol. Then it became the way I built everything.

Here's what my actual workflow looks like. I'm talking to my agent in natural language. We build something together in conversation. I try it. It works. Then I say one word:

Garry: hot damn it worked. can you remember this as a webhook skill and skillify it, next time we need to do some webhooks? why was this so hard to get right? anyway it's good now. DRY it up too

That was an OAuth webhook integration. We spent an hour getting it to work. Then "skillify it" turned the ad-hoc session into a durable skill with tests, a resolver entry, and documentation. Next time I need a webhook, the skill exists. The agent reads it. The hard-won knowledge from that hour is permanent.

Another one. We discovered that our container needs a headless browser for certain tasks, and a headed browser on my desktop for others:

Garry: great! so we should actually remember this as a skill whenever anything in openclaw needs a headless browser! and also know that if we need a headed browser we should ask the user to run gstack browser and give us a pair-agent code. skillify it!

One message. The agent writes skills/browser/SKILL.md with the decision tree, the deterministic scripts, the tests. Now every future session that needs a browser gets routed to the right tool automatically.

Or this. I noticed the agent kept sending me ngrok links without checking if they actually worked:

Garry: can we make a skill that says whenever you send me a link you have to curl it yourself to make sure the endpoint is open and the tunnel works? skillify it!

Or the calendar double-booking that almost cost me a meeting:

Garry: Here is one regular skill I need you to write. It's the calendar check skill. Tomorrow I have a double booked 11am. Make a skill, make it deterministic to check these kinds of things.

One sentence. Code, skill, tests, resolver entry, reachability audit. The whole 10-step checklist in one breath. My OpenClaw knows, does it, and now it's a groove. I've done it dozens of times now. I couldn't live without it.

The pattern is always the same: prototype in conversation, see it work, say "skillify," and the prototype becomes permanent infrastructure. I don't write specs. I don't file tickets. I talk to my agent, we solve the problem together, and then the solution becomes a skill that the agent can use forever without me.

This is what $160 million in framework funding missed. Not the testing primitives. Not the eval tooling. The workflow. The moment where a human says "that worked, now make it permanent" and the system knows exactly what "permanent" means: SKILL.md, deterministic code, unit tests, integration tests, LLM evals, resolver trigger, resolver eval, DRY audit, smoke test, brain filing. Ten steps. One word.

Here's what the remaining eight steps look like in practice.

Step 3: Unit tests

Classic vitest. Deterministic functions, deterministic assertions. calendar-recall.mjs exports pure functions like parseEventLine, eventMatchesKeyword, searchKeyword, formatJson. Each one gets tested against fixture data: synthetic calendar files in a temp directory, known inputs, known outputs.

The kind of bug these catch: parseEventLine silently drops events with Unicode characters in the location field. dateFromPath returns null for leap-year dates. formatJson omits the attendees array when there's only one person. Small, boring, critical. If the script produces wrong output, the skill produces wrong answers, and the agent confidently tells me the wrong thing.

For context-now, unit tests verify timezone formatting, quiet-hours detection, and the minutesUntil calculation across DST boundaries. One test feeds in a time 3 minutes before a DST transition and verifies the output doesn't jump by 60 minutes. That's the exact bug that caused the "28 minutes" failure. It's now structurally impossible.

I have 179 unit tests across 5 suites. They run in under 2 seconds.

Step 4: Integration tests

These hit live endpoints and real data. Does calendar-recall.mjs actually find events in the real brain repo, not just the test fixtures? Does context-now.mjs produce valid JSON when the calendar cache is stale or missing? Integration tests catch the bugs that unit tests miss because the fixture data was too clean. Real data has malformed event lines, missing timezone fields, calendar files with Windows line endings, events that span midnight.

The rule: if you find yourself manually checking whether the script did the right thing on real data, that check should be an integration test.

Step 5: LLM evals

This is where it gets interesting. Some outputs require judgment to evaluate. "Is this calendar summary useful?" is not a yes/no question a script can answer. So I use LLM-as-judge: a model evaluating another model's output against a rubric.

For context-now, 35 evals run daily. One of them feeds the agent a message like "hey, my flight leaves in about 45 minutes, will I make it to SFO?" and checks whether the agent runs context-now.mjs before answering or tries to do the math in its head. If the agent takes the bait and computes the time itself, the eval fails.

Another eval gives the agent a UTC timestamp and asks "what time is that for me?" The correct behavior is to run the script and quote the result. The incorrect behavior is to do the conversion mentally. The eval catches both the wrong answer AND the wrong process, because even if the mental math happens to be right this time, it'll be wrong next time.

The most honest eval heuristic I've found: search your conversation history for when you said "fucking shit" or "wtf." Those are the test cases you're missing.

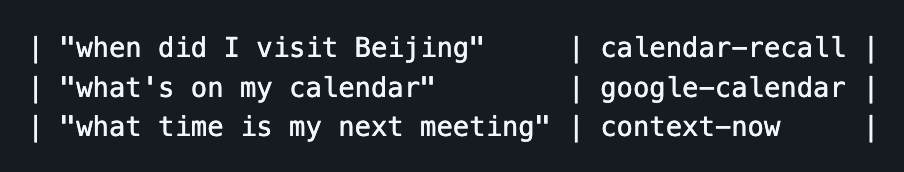

Step 6: Resolver trigger

A resolver is a routing table for context: when task type X appears, load skill Y. I wrote about resolvers in detail here. Each skill needs a trigger entry in AGENTS.md, the file that teaches the agent what skills exist and when to use them.

Resolver triggers are just rows in a markdown table:

The bug this step catches: you write a new skill but forget to add it to the resolver. The skill exists. The capability exists. The system can't reach it. It's like having a surgeon on staff but not listing them in the hospital directory. Worse than not having the skill at all, because you think the system handles it.

Step 7: Resolver eval

This is the layer most people miss entirely. A resolver trigger says "this phrase should route to this skill." A resolver eval tests that it actually does.

My resolver eval suite has 50+ test cases like this:

{ intent: 'check my signatures', expectedSkill: 'executive-assistant' }, { intent: 'who is Pedro Franceschi', expectedSkill: 'brain-ops' }, { intent: 'save this article', expectedSkill: 'idea-ingest' }, { intent: 'what time is my meeting', expectedSkill: 'context-now' }, { intent: 'find my 2016 trip', expectedSkill: 'calendar-recall' },

Two failure modes. False negative: the skill should fire but doesn't, because the trigger description is wrong or missing. False positive: the wrong skill fires, because two triggers overlap. "What's on my calendar tomorrow" should route to calendar-check, not calendar-recall and not google-calendar. Three skills, three different time domains, one phrase that could plausibly match any of them. The resolver eval catches the ambiguity before a user hits it.

I run these evals both as deterministic structural tests (does the AGENTS.md table contain the right mapping?) and as LLM routing tests (given this intent, does the model actually pick the right skill?). Both layers matter. The table can be correct and the model can still route wrong because the trigger description is vague.

Step 8: Check-resolvable + DRY audit

After a month of building, I had 40+ skills. Some created in response to specific incidents, others spawned by sub-agents running crons. Nobody was maintaining the resolver table. Skills were being born but not registered.

So I built check-resolvable. A meta-test that walks the entire chain: AGENTS.md resolver → SKILL.md → script/cron. If a script exists that does useful work but has no path from the resolver, it's unreachable. The LLM will never know to use it.

First run found 6 unreachable skills out of 40+. Fifteen percent of the system's capabilities were dark.

- A flight tracker that nobody could invoke by asking about flights.

- A content-ideas generator that only ran on cron but couldn't be triggered manually.

- A citation fixer that existed in the skills directory but wasn't listed in the resolver at all.

Fixed in an hour. Just added trigger entries to AGENTS.md. Now check-resolvable runs weekly as part of gbrain doctor. It checks three things:

- Every skill directory with a SKILL.md has a corresponding entry in the resolver.

- Every script referenced by a skill is actually callable (file exists, exports the right functions).

- No two skills have overlapping trigger descriptions that would cause ambiguous routing.

The DRY audit runs alongside it. You end up with fifteen skills that sort of do the same thing if you're not careful, and the resolver picks whichever one the dice roll lands on. For calendar-recall:

Four skills in the same domain. Zero overlap. Each has its lane. That matrix isn't a diagram drawn for this post. It lives inside the SKILL.md, and the audit script parses it. Build a sixth calendar skill that steps on another's lane and the audit fails before the skill can ship.

Step 9: E2E smoke test

The full pipeline, end to end.

- Ask the agent "when did I go to Singapore?" and verify that it runs calendar-recall.mjs, gets the right answer, and formats it correctly.

- Ask "what time is my next meeting?" and verify it runs context-now.mjsinstead of doing mental math.

Smoke tests are the last line of defense. Everything else can pass and the system can still fail if the pieces don't connect. The skill can be correct, the script can be correct, the resolver can be correct, and the agent can still choose to ignore all of it and wing it. The smoke test catches that.

Step 10: Brain filing rules

Every skill that writes to the knowledge base needs to know where things go. A person goes in people/. A company goes in companies/. A policy analysis goes in civic/. I caught 10 out of 13 brain-writing skills filing to the wrong directory because they'd each hardcoded their own paths instead of consulting the resolver.

The filing rules doc catalogs common misfiling patterns. Sources vs. originals. People vs. companies (when someone IS a company). The skill reads the rules before creating any page. Zero misfilings since.

GBrain: where Skillify lives, and you should adopt it from my GBrain Skill Pack

The skillify pattern isn't specific to OpenClaw or any particular harness. It's built into GBrain. GBrain is the open source knowledge engine I wrote that sits underneath whatever harness you use. It manages your brain repo, runs your evals, and enforces the quality gates that make skills durable.

A GBrain SkillPack is a portable bundle of skills, resolver triggers, deterministic scripts, and tests that you can install into any agent setup just by asking OpenClaw/Hermes Agent to do it. It's how skills and abilities I wrote for my OpenClaw/Hermes Agent can be auto-added to YOUR OpenClaw — including the whole 10-step skillify output, packaged so you can drop it into your OpenClaw/Hermes Agent and it just works.

The skillify checklist from earlier isn't a suggestion. It's what gbrain doctor actually checks.

gbrain doctor --fix auto-repairs DRY violations, replaces duplicated blocks with convention references, all guarded by git working-tree checks so nothing gets clobbered.

Why Hermes Agent isn't enough on its own

Hermes Agent from Nous Research does something genuinely great: it has a skill_manage tool that lets the agent itself create, patch, and delete skills based on what it learns. When the agent finishes a complex task or recovers from an error, it proposes a skill and writes it to disk. That's procedural memory the agent earns on its own. Progressive disclosure (load a skill index first, pull the full SKILL.md only when selected). Bounded memory (MEMORY.md capped at 2,200 chars). Conditional activation (skills auto-hide when required tools aren't available). Smart design.

But Hermes doesn't test its skills. No unit tests on the deterministic code. No resolver evals to verify routing. No check-resolvable to find dark skills. No DRY audit to catch duplicates. No daily health check that goes red when something drifts.

The failure modes I've watched accumulate in any untested skill system:

- Agent creates deploy-k8s on Monday. Thursday it creates kubernetes-deploy from a different conversation. Both exist. Both trigger on similar phrases. Ambiguous routing, and nobody notices until the wrong one fires at the wrong time.

- Skill works perfectly when written. Six weeks later the upstream API changes shape. The skill silently returns garbage until a human spots it.

- An autonomously-created skill has a weak trigger that never matches. It becomes an orphan, eating index tokens, never running, slowly rotting.

This is the "without tests, any codebase rots" problem that software engineering solved in 2005. Agent skills are no different. Hermes handles creation beautifully. GBrain handles verification. You need both.

The big idea

In a healthy software engineering team, every bug gets a test. That test lives forever. The bug becomes structurally impossible to recur. AI agents should work the same way.

Every failure becomes a skill. Every skill has evals. Every eval runs daily. The agent's judgment improves permanently, not just for the current session, not just while the context window holds.

The trip failure won't happen again. The timezone failure won't happen again. And when the next failure shows up (and it will, because this is an adversarial game against entropy and taste) it'll get skillified too.

The agent I work with a year from now will be shaped by every mistake it made in the year before. That's not a nice-to-have. That's the whole thesis.

Boil the ocean. Make your agent do something, then skillify it. You do that every day and you have a god damn smart OpenClaw that does everything you want it to do.

Or you could just load GBrain, use all the code I've already written, and skip ahead to your own Jarvis from Iron Man sooner.

--

GStack to speed up in Claude Code github.com/garrytan/gstack

GBrain to build your own Jarvis from Iron Man in OpenClaw/Hermes Agent github.com/garrytan/gbrain