so meta just open-sourced a model that simulates how the human brain reacts to a video.

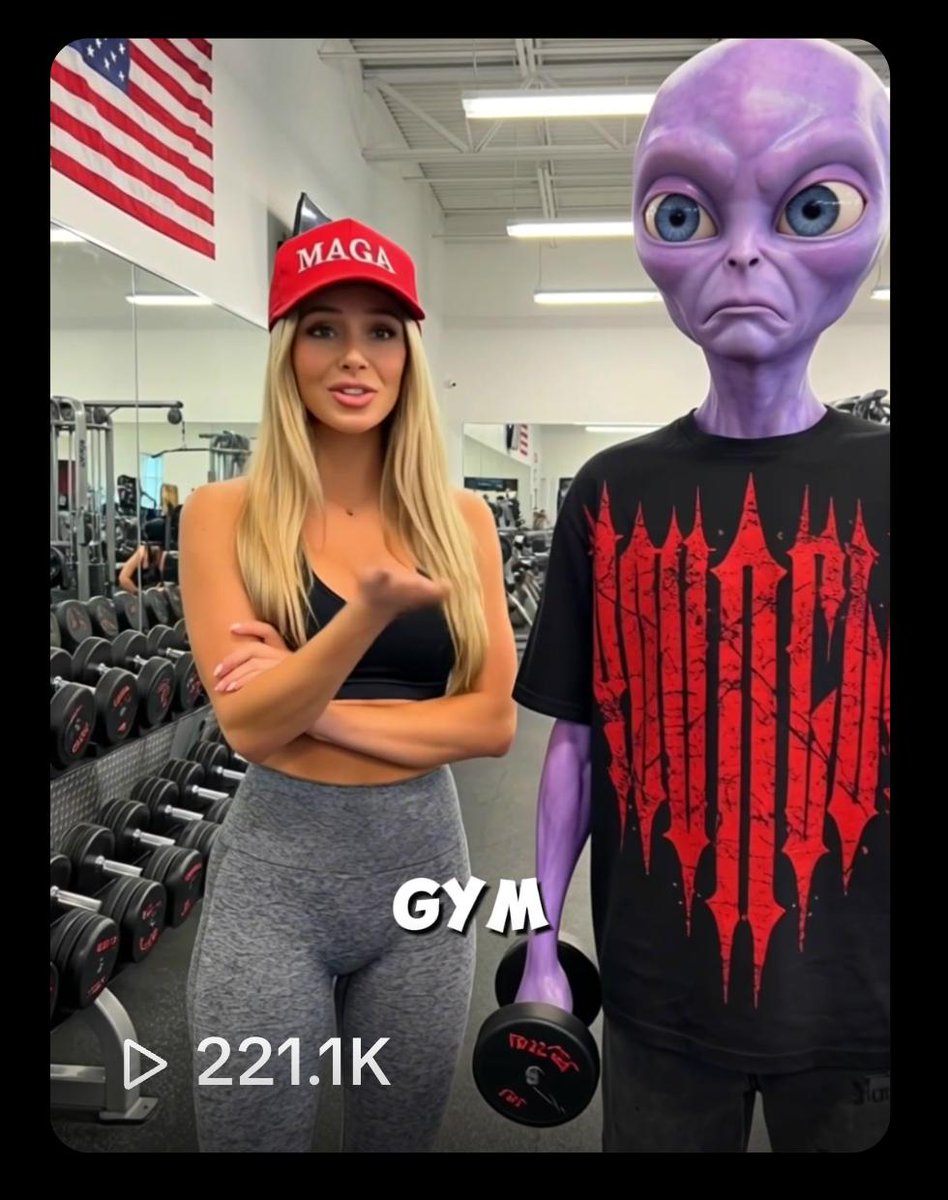

i ran a creator's draft through it, re-edited based on what it showed, and we got 221,100 views.

i found an open-source model that meta's research team published days ago. it simulates how the human brain responds to video, audio, and text. trained on brain scans from 720 real people.

i took a video draft from one of @affiliatenw's ai ugc creators (@sammgrowth), ran it through the model, re-edited based on what the simulator showed us, and posted it.

the video got 221,100 views.

here's the breakdown of exactly what it is, how to set it up without writing a single line of code yourself, and how to actually make money from it as a creator:

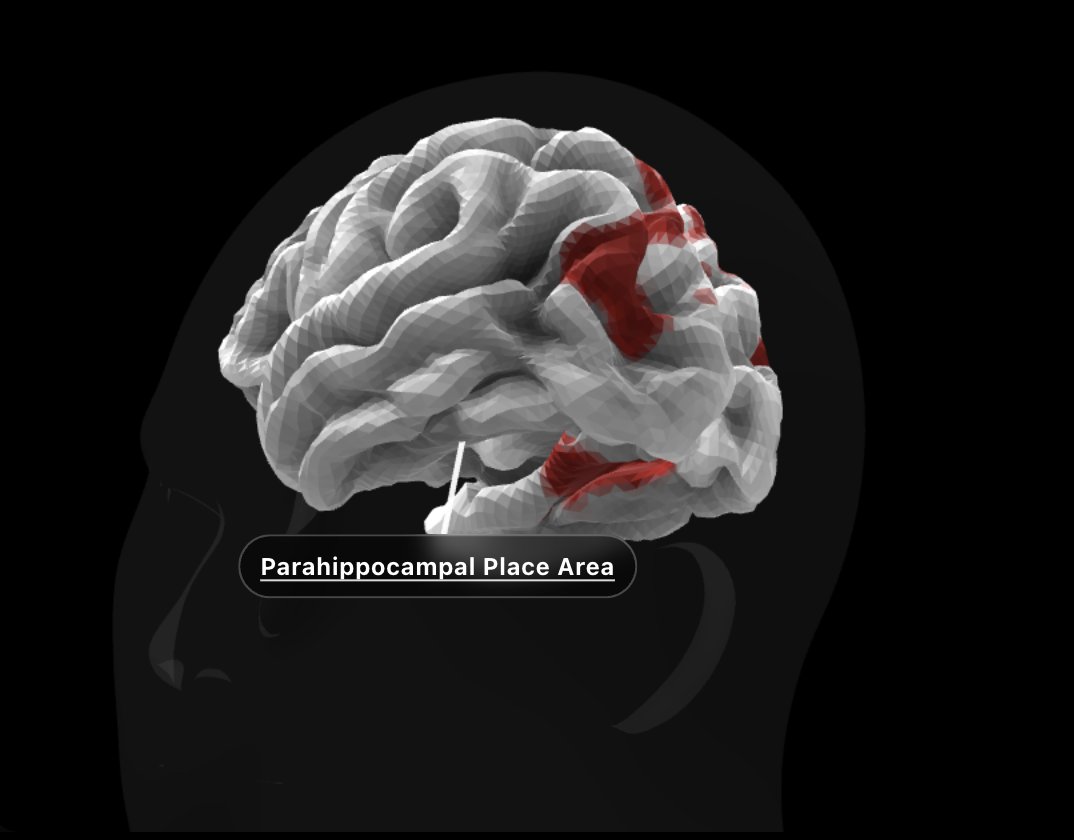

the model is called TRIBE v2. trained on brain scans from 700+ real people watching videos, listening to audio, reading text. it predicts how 70,000 points in your brain light up when you watch something.

you give it a video, it tells you how a human brain would react to every second of that video.

meta's FAIR team trained it on over 1,000 hours of fMRI recordings from 720 volunteers. these people watched movies, listened to podcasts, read text, all while their brain activity was recorded at high resolution.

TRIBE v2 learned the patterns. now it predicts brain responses to media it has never seen before. no humans in fMRI machines needed.

the wild part: meta's own research shows TRIBE v2's predictions are actually more accurate than a single real brain scan. real scans are noisy. heartbeats, movement, device artifacts. the model strips all that out and gives you the clean signal.

they open-sourced everything, it's completely free.

how to set it up (no coding required)

meta made this stupid easy.

they provide a free Google Colab notebook that runs the entire model for you. here's the exact setup:

step 1: open the Colab notebook (linked in meta's TRIBE v2 repo). go to runtime > change runtime type > select T4 GPU > hit save.

step 2: run the pip install cell, then restart your environment.

step 3: you need a Hugging Face account. go to huggingface.co, create one if you don't have it, then go to your profile dropdown > access tokens > create new token. name it whatever you want, give it read access, copy it.

step 4: you also need to request access to the Llama model on Hugging Face (used for the text portion of the input). submit the form. mine took about an hour to get approved.

step 5: back in the notebook, go to the key icon in the sidebar, add HF_TOKEN and paste your token.

step 6: run the cells. they even include a sample video so you can see brain prediction results immediately.
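if the cells in step 6 fail with an auth error, the token from step 5 is the first thing to check. here's a minimal sketch of a sanity-check cell. it's not part of meta's notebook, and the helper name `get_hf_token` is made up for illustration. it reads `HF_TOKEN` from Colab secrets and falls back to an environment variable when run outside Colab:

```python
import os


def get_hf_token():
    """Return the Hugging Face token from Colab secrets, else from the environment."""
    try:
        # google.colab only exists inside a Colab runtime
        from google.colab import userdata
    except ImportError:
        # not in Colab: fall back to a plain environment variable
        return os.environ.get("HF_TOKEN")
    # raises an error if the secret is missing or notebook access is off
    return userdata.get("HF_TOKEN")


token = get_hf_token()
print("token found" if token else "no HF_TOKEN set, go back to step 5")
```

it only checks that a token exists. it doesn't check that your Llama access request from step 4 was approved.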

now you can feed it any video and it tells you exactly where brain engagement spikes and where it flatlines.

watch this simple video to understand the process better: https://www.youtube.com/watch?v=VER-F4wdA9Q

how we got 221,100 views

we did a small experiment with @sammgrowth.

took his already-edited video and ran it through TRIBE v2. the model showed me exactly where brain engagement dropped and where it spiked.

based on the neural data, we re-edited. moved the highest-engagement moments earlier, cut the dead zones where brain activity flatlined, restructured the pacing to keep neural response elevated throughout.
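to make the re-edit logic concrete, here's a rough sketch of the idea, not the exact process we used. it assumes you've already boiled the model's output down to one engagement score per second (that reduction, the scores, and the 0.35 cutoff are all made up for illustration). it flags dead zones to cut and the strongest moments to move earlier:

```python
def find_dead_zones(scores, threshold, min_len=2):
    """Return (start, end) second ranges where the score stays below threshold.

    end is exclusive; runs shorter than min_len seconds are ignored.
    """
    zones = []
    start = None
    for t, s in enumerate(scores):
        if s < threshold:
            if start is None:
                start = t  # a low-engagement run begins here
        else:
            if start is not None and t - start >= min_len:
                zones.append((start, t))
            start = None
    # close a run that lasts until the end of the clip
    if start is not None and len(scores) - start >= min_len:
        zones.append((start, len(scores)))
    return zones


def top_moments(scores, k=3):
    """Return the k seconds with the highest score, best first."""
    return sorted(range(len(scores)), key=lambda t: scores[t], reverse=True)[:k]


# made-up per-second scores for a 12-second clip (hypothetical data)
scores = [0.4, 0.5, 0.2, 0.1, 0.15, 0.6, 0.9, 0.8, 0.3, 0.2, 0.1, 0.7]

print("cut candidates:", find_dead_zones(scores, threshold=0.35))
print("move earlier:", top_moments(scores))
```

the threshold is a judgment call. a fixed cutoff is the simplest thing that works, but you could also use the clip's own average so short and long videos are treated the same way.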

the optimized version showed significantly stronger predicted brain engagement than the original. we didn't spend much time on this since it was just research, but even a quick pass made a clear difference.

we posted it and it got 221,100 views.

here is the actual video:

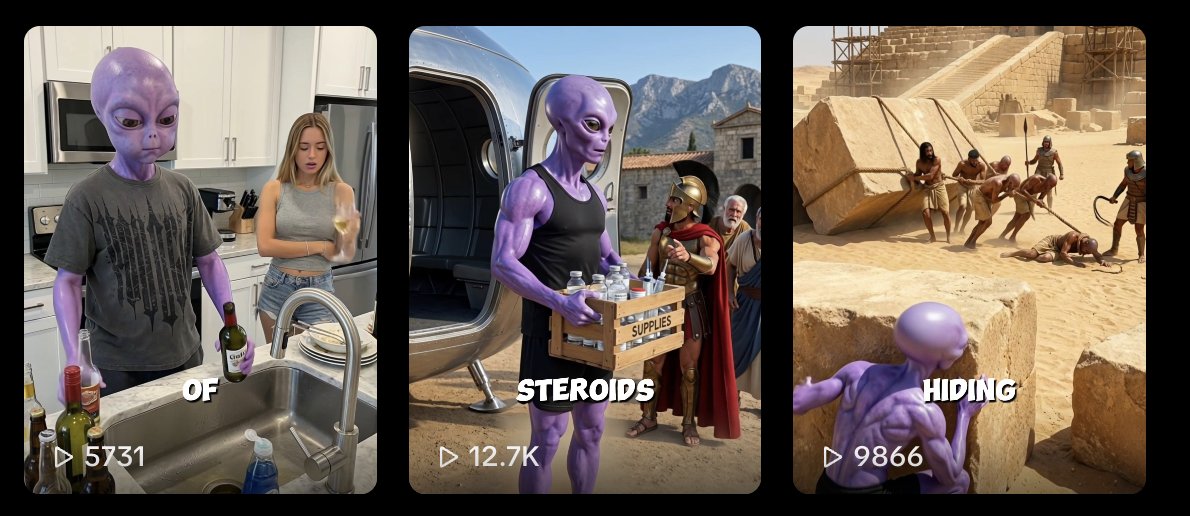

the previous videos were getting way less than that.

that's on a single video with minimal optimization effort. imagine what happens when you run every piece of content through this before posting.

how gen z can make actual money from this

getting views is good, but how do you actually monetize as a creator?

we’re building the biggest platform for creators who are cracked at virality and want to get paid for it.

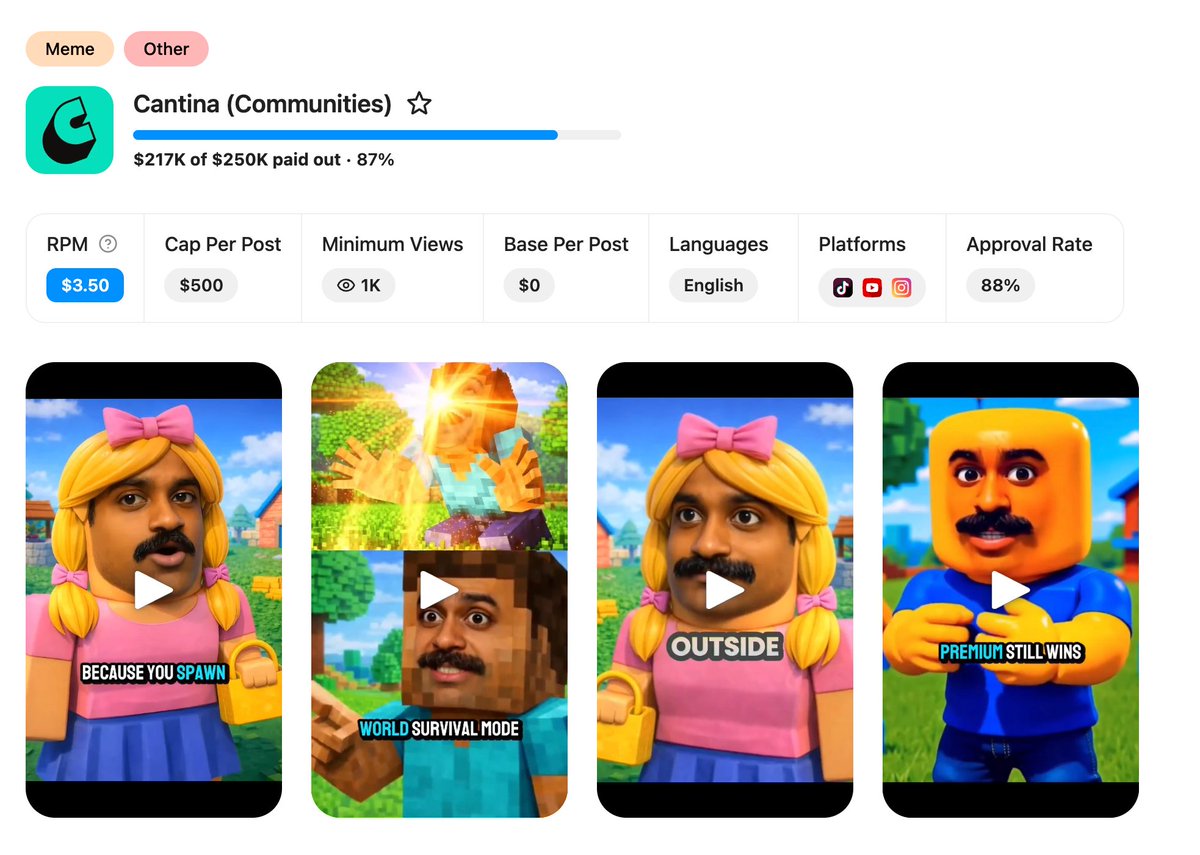

a lot of brands are running campaigns, creators join, post videos and earn per 1,000 views.

here are the exact steps:

- go to affiliatenetwork.com and join any campaign u like. there are campaigns right now paying $1-3 per 1,000 views.

- check the content format the brand wants. create variations. run each variation through TRIBE v2 before posting and pick the version with the strongest predicted brain response.

- at $3.5 CPM, 221,100 views = about $774 from a single video. now multiply that across 10 accounts posting daily with brain-optimized content.
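the payout math is just views / 1,000 × CPM. here's a quick sketch so you can plug in your own numbers. the function name is made up, and the rates are the ones mentioned above:

```python
def payout(views, cpm_usd):
    """Earnings in USD for a given view count at a given rate per 1,000 views."""
    return views / 1000 * cpm_usd


# the same video at the low end, the high end, and the $3.5 rate used above
for cpm in (1.0, 3.0, 3.5):
    print(f"${cpm:.2f} CPM -> ${payout(221_100, cpm):,.2f}")
```

what you actually earn depends on the rate of the campaign you join, so check it before you do the math.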

we already have creators making $100,000+ on our platform.

use claude to automate the entire prediction pipeline. give it your video files, have it run each one through TRIBE v2, rank them by predicted engagement, and tell you which version to post.

no need to manually check brain maps. feed in 10 video variations, claude tells you which one gets the strongest neural response, you post that one.
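the ranking step is simple enough to sketch. this assumes each variation has already been run through the model and reduced to a list of per-second engagement scores. that part isn't shown because it depends on the notebook's code. the file names, scores, and using the mean as the ranking metric are all assumptions for illustration:

```python
from statistics import mean


def rank_variations(scores_by_file):
    """Sort video variations by mean predicted engagement, strongest first."""
    ranked = [(name, mean(scores)) for name, scores in scores_by_file.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# hypothetical per-second scores for three edits of the same video
variations = {
    "hook_a.mp4": [0.4, 0.6, 0.7, 0.5],
    "hook_b.mp4": [0.8, 0.7, 0.6, 0.9],
    "hook_c.mp4": [0.3, 0.2, 0.4, 0.3],
}

for name, score in rank_variations(variations):
    print(f"{name}: {score:.2f}")
```

mean score is the bluntest possible metric. if the first few seconds matter most for your format, you could weight the opening seconds more heavily.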

this turns content optimization from an art into engineering. same effort, dramatically better results.

btw, @sammgrowth created his new X account, go and follow him:

---------------

demo: https://aidemos.atmeta.com/tribev2

model: https://huggingface.co/facebook/tribev2

the biggest ai ugc and clipping platform: AffiliateNetwork.com