by the end of this article you'll have a working production stack that generates hyper-realistic ugc at scale. (this took me 8 months to figure out.)

- you'll know which model to use for which shot type.

- you'll have the exact prompt structures.

- you'll understand why most ai video looks fake and how to fix it.

everything below is implementable today.

the character engine (where everything starts)

higgsfield changed the game for me. before i found it, character generation was the bottleneck. i'd generate faces that looked plastic. skin that looked airbrushed. eyes that looked dead.

the breakthrough was understanding that higgsfield's nano banana 2 responds to json prompting differently than text prompting. text prompts produce grayscale color grading. 4-5 colors max. sometimes realistic, sometimes garbage.

json acts as a blueprint. it controls exact color grading, realistic lighting, and visual styling details. it multiplies output quality by 10.

here's the exact structure that works:

cost per character image in higgsfield: $0.08-0.09

that json is your foundation. save it. modify the demographic details for different campaigns. the structure stays the same.
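as an illustration of what a structured character prompt can look like, here is a minimal sketch in python. the field names (gender, age, hair.color, face.skin.texture, environment.location, pose, camera.device) are borrowed from the demographic variations later in this article. the `props` key, the camera value, and the overall nesting are assumptions for illustration, not higgsfield's documented schema.

```python
import json


def build_character_prompt(gender, age, hair_color, skin_texture,
                           location, props, pose, camera_device):
    """Assemble a nested character prompt (hypothetical schema)."""
    return {
        "gender": gender,
        "age": age,
        "hair": {"color": hair_color},
        "face": {"skin": {"texture": skin_texture}},
        # "props" is an assumed key for the clutter items in the scene
        "environment": {"location": location, "props": props},
        "pose": pose,
        "camera": {"device": camera_device},
    }


prompt = build_character_prompt(
    gender="female",
    age="55-65",
    hair_color="silver grey",
    skin_texture="age lines, sun spots, natural aging",
    location="kitchen",
    props=["morning coffee", "pill bottles on counter"],
    pose="caught on camera, natural",
    camera_device="smartphone",  # assumed value
)

# serialize to the json text you would paste into the prompt field
prompt_json = json.dumps(prompt, indent=2)
print(prompt_json)
```

keeping the structure in a function means a new campaign only changes the arguments, which matches the "modify the demographic details, keep the structure" idea.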


the color grading fix (pinterest to gemini workflow)

here's what took me three weeks to figure out: nano banana pro oversaturates by default. the colors look off. images come out looking like ai art even when the face is realistic.

the fix is a reference image pipeline.

step one: go to pinterest. search for the aesthetic you want. find 5-7 images with your target color grade. warm lighting. realistic skin tones. the vibe you're going for.

step two: feed those images to gemini with this prompt:

step three: take that output and add it to your higgsfield json prompt as a color directive. the model now references real-world color grading patterns.

the difference is immediate. images go from "obviously ai" to "could be a real photo."

the visionstruct method (for character variations)

one master image is worthless. you need 4-6 scene variations of the same character for any video that doesn't look like a slideshow.

the visionstruct method fixes this. instead of generating random variations, you describe specific poses in specific moments with specific imperfections.

the structure:

pose first. always lead with the physical position. "woman sitting in car driver seat, left hand on steering wheel, right hand holding coffee cup, torso turned 15 degrees toward camera."

then environment details. real environments have clutter. "morning sunlight through windshield creating lens flare at 2 o'clock, takeout bag visible on passenger seat, parking garage concrete visible through rear window."

then the anti-ai tells. this separates amateur from professional output. "slight undereye circles suggesting early morning, 2-3% visible skin texture on forehead, one flyaway hair strand across left eyebrow, asymmetrical smile with left side slightly higher."

total prompt length: 150-250 words. anything shorter lacks the specificity needed for consistency. anything longer and the model starts ignoring tokens.
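the ordering and the length window above can be checked mechanically. this is a minimal sketch, assuming "words" means whitespace-separated tokens. the function names are hypothetical helpers, not part of any tool mentioned here.

```python
def build_scene_prompt(pose, environment, anti_ai_tells):
    """Join the three parts in the order described: pose, environment, imperfections."""
    parts = [pose.strip(), environment.strip(), anti_ai_tells.strip()]
    return " ".join(p if p.endswith(".") else p + "." for p in parts)


def word_count(text):
    # assumption: a word is any whitespace-separated token
    return len(text.split())


def length_ok(text, low=150, high=250):
    """True when the prompt sits inside the 150-250 word window."""
    return low <= word_count(text) <= high


scene_prompt = build_scene_prompt(
    pose="woman sitting in car driver seat, left hand on steering wheel, "
         "right hand holding coffee cup, torso turned 15 degrees toward camera",
    environment="morning sunlight through windshield creating lens flare at 2 o'clock, "
                "takeout bag visible on passenger seat, "
                "parking garage concrete visible through rear window",
    anti_ai_tells="slight undereye circles suggesting early morning, "
                  "2-3% visible skin texture on forehead, "
                  "one flyaway hair strand across left eyebrow, "
                  "asymmetrical smile with left side slightly higher",
)
print(word_count(scene_prompt), length_ok(scene_prompt))
```

the three example fragments on their own come in well under 150 words, so a real prompt needs more detail in each part before it reaches the window.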

generate your master reference in higgsfield.

then use img2img from that master to create your scene variations. different poses, different environments, same identity.

model selection (this is where most people waste money)

different models excel at different things. using the wrong model for a shot type wastes credits and produces inferior output.

kling v3 pro: the workhorse for talking head dialogue. voice_ids maintain voice consistency across multiple videos. multi-shot system handles 2 shots per video at 7s + 8s. costs $4.70 per generation via api.

the kling prompt structure that actually works:

the mandatory footer on every kling prompt: "natural blinking, NO over-reaction, no exaggerated facial movements. natural hand gestures. Voice_id: Voice_1. tone: [modifier]. [Shot type], [camera movement]."

the 512 character limit is hard. you cannot exceed it. this forces precision.
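a small helper can append the footer and enforce the limit before you spend credits. this is a sketch under two assumptions: the footer counts toward the 512 characters, and the limit is measured in plain characters. `build_kling_prompt` is a hypothetical local helper, not a kling api call.

```python
KLING_CHAR_LIMIT = 512  # hard limit stated above

# footer text copied from the article, with the bracketed parts as fields
FOOTER_TEMPLATE = (
    "natural blinking, NO over-reaction, no exaggerated facial movements. "
    "natural hand gestures. Voice_id: {voice_id}. tone: {tone}. "
    "{shot_type}, {camera_movement}."
)


def build_kling_prompt(scene, voice_id, tone, shot_type, camera_movement):
    """Append the mandatory footer and refuse prompts over the limit."""
    footer = FOOTER_TEMPLATE.format(
        voice_id=voice_id,
        tone=tone,
        shot_type=shot_type,
        camera_movement=camera_movement,
    )
    prompt = f"{scene.strip()} {footer}"
    if len(prompt) > KLING_CHAR_LIMIT:
        raise ValueError(
            f"prompt is {len(prompt)} characters, limit is {KLING_CHAR_LIMIT}"
        )
    return prompt


kling_prompt = build_kling_prompt(
    scene='Woman in a bright kitchen holds up a bottle. Says "Okay so this changed my mornings."',
    voice_id="Voice_1",
    tone="confident",
    shot_type="Medium close-up",
    camera_movement="handheld shoulder-cam with subtle sway",
)
print(len(kling_prompt), kling_prompt)
```

failing loudly on an over-length prompt is a deliberate choice here: silently truncating would cut the footer, which is the part meant to appear on every prompt.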

veo 3.1: use this for cinematic b-roll and product consistency. ingredient mode locks your character's face and product elements. first-to-last frame mode maintains environment consistency across cuts.

the veo workflow is visual-first. you build around what the scene looks like. upload character base image as ingredient one. upload product photo as ingredient two. write prompt describing action. veo locks both elements while generating.

seedance 2.0: this is bytedance's model. accessed through higgsfield. the @ system is what makes it different.

you upload images, videos, and audio. each gets labeled automatically. @Image1, @Video1, @Audio1. then you reference them in your prompt.

@Image1 as the first frame, reference @Video1 for camera movement, use @Audio1 for background music

you're stealing cinematography from any video and applying it to your own characters. find a winning ugc creative. feed it as @Video1. swap in your character from higgsfield as @Image1. one-click recreation of trending content with your own identity.

the motion transfer use case: @Image1 performs the dance from @Video1. perfect motion with a custom character from higgsfield. this is the pro move.

the multi-shot dialogue system (kling v3 pro deep dive)

kling v3 pro handles dialogue better than any other model right now. the multi-shot feature splits video into different scenes inside the same environment.

critical rule: maximum 2 prompts per generation. more than 2 degrades quality significantly. if you need 4 scenes, split into 2 separate generations and cut together.

the 2.5 words per second rule: a 30-second video gets 75 words max. a 15-second video gets 37 words max (37.5, rounded down). the model compresses any long script into the timing you give it. if your dialogue takes 15 seconds to speak naturally and you set 10 seconds of video, lip sync breaks completely.

here's an exact working prompt for a supplement ad:

prompt 1 (7 seconds, 17-word budget, 10 words of dialogue):

Handheld camera with natural shake, woman standing in luxury apartment with windows and skyline. Gray sweatshirt, amber sunglasses. Says "Okay so these gut health gummies changed everything for me." Animated gestures, confident tone. Bright natural light, slight camera movement adds authenticity

prompt 2 (8 seconds, 20-word budget, 13 words of dialogue):

Back to the handheld camera, a woman standing in the same apartment setting with windows. Removes sunglasses revealing excited eyes, leans toward camera. Says "My digestion, and even my skin improved in like two weeks. I'm obsessed." Big genuine smile, enthusiastic hand gestures. Natural lighting, authentic energy, slight camera shake continues.

the filler words matter. adding "Euuhh..." or pauses in dialogue makes it sound natural. real humans say "um" and "like" and "you know." your scripts need these elements.
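the 2.5 words per second rule is easy to check before generating. a minimal sketch, assuming the budget is rounded down and only the spoken dialogue (not the scene description) counts toward it. the helper names are hypothetical.

```python
import math

WORDS_PER_SECOND = 2.5  # pacing rule from this section


def word_budget(seconds):
    """Maximum spoken words for a shot, rounded down (an assumption)."""
    return math.floor(seconds * WORDS_PER_SECOND)


def spoken_words(dialogue):
    # counts whitespace-separated tokens, so fillers like "um" count too
    return len(dialogue.split())


def fits(dialogue, seconds):
    return spoken_words(dialogue) <= word_budget(seconds)


line_1 = "Okay so these gut health gummies changed everything for me."
line_2 = "My digestion, and even my skin improved in like two weeks. I'm obsessed."

for line, seconds in ((line_1, 7), (line_2, 8)):
    print(seconds, word_budget(seconds), spoken_words(line), fits(line, seconds))
```

both example lines leave headroom under their budget, which is where filler words and pauses can go without breaking the timing.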

the @ system (seedance motion transfer)

seedance 2.0 accepts up to 9 images, 3 videos, and 3 audio files, capped at 12 total inputs per generation. output duration: 4-15 seconds at native 2k resolution.

the power is in combining references. upload a photo of your higgsfield character as @Image1. upload a dance video from tiktok as @Video1. prompt: "@Image1 performs the choreography from @Video1."

the model extracts movement patterns from video references and applies them to your character images.
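to keep track of which file ends up under which @ label, a local bookkeeping helper can mirror the limits above. this is a sketch only: it assumes labels are assigned in upload order per media type, and `label_inputs` is a hypothetical function, not part of seedance or higgsfield.

```python
MAX_IMAGES, MAX_VIDEOS, MAX_AUDIO, MAX_TOTAL = 9, 3, 3, 12  # limits quoted above


def label_inputs(images, videos, audio):
    """Map @Image1, @Video1, @Audio1, ... to filenames, enforcing the limits."""
    if len(images) > MAX_IMAGES:
        raise ValueError("too many images")
    if len(videos) > MAX_VIDEOS:
        raise ValueError("too many videos")
    if len(audio) > MAX_AUDIO:
        raise ValueError("too many audio files")
    if len(images) + len(videos) + len(audio) > MAX_TOTAL:
        raise ValueError("too many inputs in total")

    labels = {}
    for prefix, files in (("Image", images), ("Video", videos), ("Audio", audio)):
        # assumption: numbering starts at 1 and follows upload order
        for index, name in enumerate(files, start=1):
            labels[f"@{prefix}{index}"] = name
    return labels


labels = label_inputs(["character.png"], ["dance.mp4"], [])
prompt = "@Image1 performs the choreography from @Video1."
print(labels)
print(prompt)
```

note that the per-type maximums add up to 15, so the total cap of 12 is a separate check and not implied by the other three.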

for creative template replication: find a winning ad creative. download it as reference. feed as @Video1. add your higgsfield character as @Image1. add your product as @Image2. prompt: "Replace the person in @Video1 with the character from @Image1. Reference @Video1's camera work, transitions, and editing rhythm. Product from @Image2 replaces original product."

this is the agency scaling play. find what works. replicate the format with your own assets.

for multi-language localization: generate one master video in english. use seedance to re-sync the lip movements to audio in spanish, portuguese, or german. same visual performance, different language audio that actually syncs.

the 3-shot structure works best: setup (00-05s), action (05-10s), payoff (10-15s).

the voice pipeline (why most ai audio fails)

voice is where most ai ugc falls apart. the cadence is too even. the breaths are too perfect. the emphasis patterns are predictable.

the two-step normalization pipeline fixes this.

step one: capcut voice normalization. import your video. identify every section where the character is talking. use capcut's "voice change" feature. pick any random base voice from their library. apply to all talking sections.

what this does: removes weird accents. removes inconsistencies. removes robotic artifacts. normalizes everything to a clean, consistent base.

step two: elevenlabs voice transformation. take the normalized audio from capcut. upload to elevenlabs. use voice transform. apply one of their realistic voice profiles. the result sounds like a real person recorded on decent equipment.

the key: capcut creates a clean foundation. elevenlabs transforms that foundation into a specific character voice. skip step one and your elevenlabs output sounds inconsistent because the source audio was already mismatched.

room tone layer: before exporting final audio, add ambient room noise at -28 dB. this fills the silence between phrases and makes the audio feel like it was recorded in a real space.
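for a sense of how quiet -28 dB is, here is a minimal sketch of the math. it assumes the level is relative to full scale and that audio samples are floats between -1.0 and 1.0. plain white noise stands in for room tone here, which is a simplification: a real recording of an empty room sounds more natural.

```python
import random


def db_to_gain(db):
    """Convert a decibel level to a linear amplitude multiplier."""
    return 10 ** (db / 20)


def add_room_tone(samples, level_db=-28.0, seed=0):
    """Mix low-level noise under the audio and clamp to the valid range."""
    rng = random.Random(seed)
    gain = db_to_gain(level_db)
    mixed = []
    for sample in samples:
        noise = rng.uniform(-1.0, 1.0) * gain  # stand-in for recorded room tone
        mixed.append(max(-1.0, min(1.0, sample + noise)))
    return mixed


silence = [0.0] * 1000  # a gap between two phrases
filled = add_room_tone(silence)
print(round(db_to_gain(-28.0), 4), max(abs(x) for x in filled))
```

at -28 dB the noise peaks at roughly 4% of full scale: enough to remove dead-silent gaps, low enough to sit under the voice.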

the anti-ai detection layer

the 35-65+ demographic controls 85% of household spending and has functionally zero ability to detect ai-generated content when it's produced correctly. younger demographics are developing detection instincts.

the anti-ai tells that make content pass:

film grain: 2-3% overlay across everything. ai video is too clean. real phone cameras have sensor noise.

camera shake: 2% movement in the first 1-3 seconds of each scene. this mimics the "picking up camera" moment that real ugc has. then it stabilizes.

filler words: "um" and "like" and "you know" added to scripts. ai-generated scripts have unnaturally perfect flow.

room tone: ambient noise at -28 dB under all audio. the silence between phrases in ai audio is too clean.

micro-pauses: 0.3-0.5 second pauses between thoughts in dialogue. real humans don't speak in perfect streams.

asymmetry in images: one flyaway hair strand. slightly uneven smile. visible pore texture. ai tends toward perfect symmetry.
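most editors apply grain as an overlay, but the idea behind it fits in a few lines. this sketch assumes "2-3%" means noise with a standard deviation of about 2.5% of the 0-255 brightness range, which is one possible reading of the number. it works on a single grayscale frame stored as nested lists.

```python
import random


def add_film_grain(pixels, strength=0.025, seed=0):
    """Add gaussian sensor-style noise to a grayscale frame (values 0-255)."""
    rng = random.Random(seed)
    sigma = strength * 255  # assumed meaning of a "2-3%" grain setting
    grainy = []
    for row in pixels:
        new_row = []
        for value in row:
            noisy = value + rng.gauss(0, sigma)
            # clamp so the result is still a valid 8-bit brightness value
            new_row.append(int(max(0, min(255, round(noisy)))))
        grainy.append(new_row)
    return grainy


flat_frame = [[128] * 8 for _ in range(8)]  # a perfectly clean mid-gray patch
grainy_frame = add_film_grain(flat_frame)
print(grainy_frame[0])
```

the clean patch is the "too clean" case: every pixel identical. after grain, neighboring pixels differ by a few levels, closer to what a phone sensor produces.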

the complete workflow (implementation sequence)

workflow one: solo presenter ugc (30 seconds, 4 scenes)

start in higgsfield with nano banana 2. generate master character reference using the json structure. one image, optimized for consistency.

generate 4 scene variations using img2img from your master. different poses, different environments, same identity.

write script at 2.5 words per second. 75 words total for 30 seconds. include filler words.

run through kling v3 pro. video 1: scenes 1-2 (15s, 2 shots). video 2: scenes 3-4 (15s, 2 shots).

assemble in capcut. add film grain. add camera shake to opening frames. run through voice pipeline.
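the split into two kling generations can be sketched as a simple grouping step. the 7s and 8s shot lengths come from the multi-shot section. the function and the scene names are hypothetical placeholders.

```python
MAX_SHOTS_PER_GENERATION = 2  # the 2-prompt limit described for kling multi-shot


def plan_generations(scenes):
    """Group (name, seconds) scenes into generations of at most 2 shots."""
    generations = []
    for start in range(0, len(scenes), MAX_SHOTS_PER_GENERATION):
        shots = scenes[start:start + MAX_SHOTS_PER_GENERATION]
        generations.append({
            "shots": [name for name, _ in shots],
            "seconds": sum(seconds for _, seconds in shots),
        })
    return generations


scenes = [("scene 1", 7), ("scene 2", 8), ("scene 3", 7), ("scene 4", 8)]
plan = plan_generations(scenes)
for number, generation in enumerate(plan, start=1):
    print(f"video {number}: {generation['shots']} ({generation['seconds']}s)")
```

the same grouping works for any scene count: an odd scene at the end becomes a single-shot generation.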

workflow two: motion transfer ad (template replication)

find winning creative on tiktok or instagram. download it.

generate character in higgsfield that matches your target demographic.

upload to seedance. winning creative as @Video1. higgsfield character as @Image1. product photo as @Image2.

prompt: "Replace the person in @Video1 with @Image1. Reference @Video1's camera work and editing rhythm. @Image2 replaces the original product."

generate. run through voice pipeline.

workflow three: multi-language campaign

generate master english video using workflow one.

for each target language: upload original video to seedance as @Video1. generate new audio in target language via elevenlabs. upload as @Audio1. prompt: "@Video1 with lip sync matched to @Audio1."

same visual performance, different language audio.

the failure modes that kill videos

same face in every scene. if you use your anchor character image directly in every scene without generating pose variations, viewers notice. it reads as slideshow even when there's motion. always generate 4-6 pose variants in higgsfield before producing scenes.

exceeding 2 shots per kling generation. quality degrades significantly past 2 prompts. work within the limit and assemble in post.

exceeding kling's lip-sync length. don't put more than 10 seconds of continuous dialogue in a single shot. past that, the lip sync starts to drift.

perfect audio. no filler words, no pauses, no breaths. add these manually.

missing room tone. silence between phrases is too clean. layer ambient room noise at -28 dB.

over-prompting. hitting the 512 character limit in kling usually means you're trying to control too much. focus on one primary action per shot.

skipping the two-step voice pipeline. elevenlabs alone with ai source audio sounds robotic. capcut normalization first.

using the same background ingredient across unrelated scenes. viewers pattern-match repeated environments. vary the setting when possible.

cost breakdown

higgsfield nano banana pro image: $0.08-0.09

kling v3 pro 15-second generation: $4.70 (includes multi-shot)

seedance generation: ~$0.50-0.80 depending on complexity

veo 3.1: ~$0.75 per second of output

standard 30-second ugc ad total:

- 4-6 character images: ~$0.50

- 2 kling v3 pro generations: ~$9.40

- voice pipeline: ~$0.10

- total: ~$10 per finished ad
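the itemized total can be reproduced with a few lines, using only the prices listed in this section. the function names are hypothetical, and the defaults simply restate the list above.

```python
def image_cost_range(min_images=4, max_images=6, low_price=0.08, high_price=0.09):
    """Cheapest and most expensive image bill for one ad."""
    return round(min_images * low_price, 2), round(max_images * high_price, 2)


def ad_cost(image_total=0.50, kling_generations=2, kling_price=4.70, voice=0.10):
    """Total api cost for one standard 30-second ad."""
    return round(image_total + kling_generations * kling_price + voice, 2)


print(image_cost_range())
print(ad_cost())
```

the two kling generations make up almost all of the total, so the number of video generations per ad is the line item to watch.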

at scale (200+ videos/month):

- batch image generation brings cost per image down

- voice cloning eliminates per-video voice costs

- effective cost per video: $0.38-0.50

traditional ugc costs $150-500 per video for creator fees alone. add editing, iteration, revision rounds: $300-800 per finished piece.

this system produces equivalent output at $0.38-0.50 per video in api costs.

the seedance @ system prompts (exact structures)

basic motion transfer:

@Image1 performs the choreography from @Video1. Modern studio setting. Cinematic 4K quality.

template replication:

Replace the person in @Video1 with the character from @Image1. Reference @Video1's camera work, transitions, and editing rhythm. [Product from @Image2] replaces [original product]. Keep the same energy and pacing.

multi-shot narrative:

@Image1 is the main character. Reference @Video1 for camera movement. Use @Audio1 for rhythm.

Shot 1 (00-05s): Wide establishing shot, character intro, first action
Shot 2 (05-10s): Close-up tracking shot, peak action, camera movement
Shot 3 (10-15s): Climax reveal, dramatic camera, final impact

one-take continuity:

@Image1 through @Image5, one continuous tracking shot following the subject up stairs, through corridors, ending with an overhead view.

the kling v3 pro camera reference

dolly push-in: intimacy, tension. "slow dolly push-in toward her face"

tracking shot: follow subject. "camera tracks alongside as she walks"

whip-pan: energy, surprise. "whip-pan to reveal the door"

crash zoom: shock, emphasis. "sudden crash zoom on the object"

rack focus: shift attention. "rack focus from foreground hand to background figure"

handheld shoulder-cam: raw, documentary. "handheld shoulder-cam with subtle sway"

static tripod: composed. "locked-off static tripod, wide shot"

demographic variations for higgsfield json

older woman (health offers targeting 50+):

- age: 55-65

- hair.color: "salt and pepper" or "silver grey"

- face.skin.texture: add "age lines, sun spots, natural aging"

- environment.location: "kitchen" with "morning coffee", "pill bottles on counter"

- pose: less selfie, more "caught on camera" natural

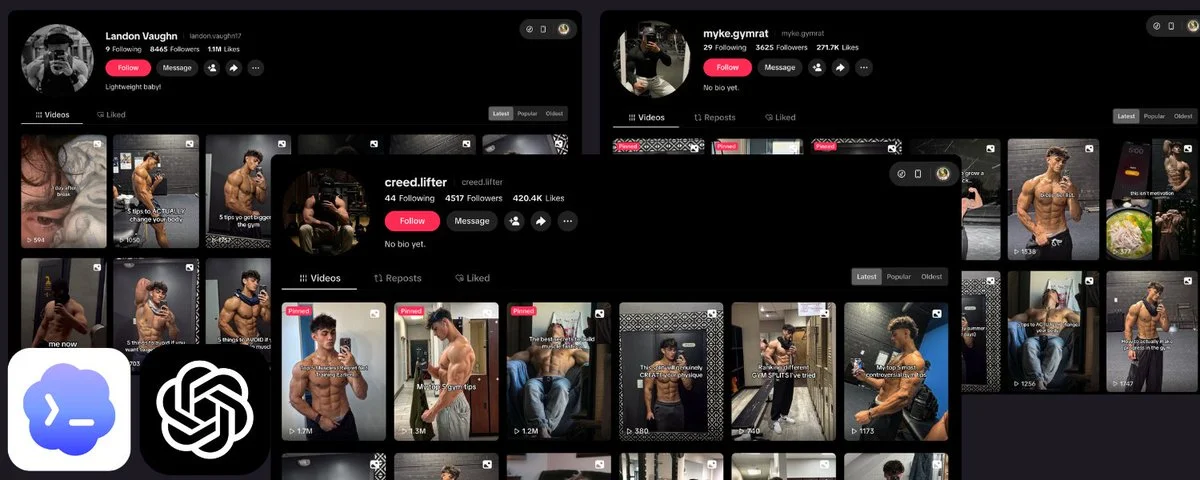

gym guy (fitness supplements):

- gender: "male"

- age: 28-35

- hair.style: "short, slightly sweaty"

- face.skin: "flushed from exercise, slight sweat"

- environment.location: "gym locker room" or "home gym"

- add clothing: "fitted tank top, visible fitness"

professional male (finance/tech):

- gender: "male"

- age: 30-45

- hair.style: "clean cut, professional"

- environment.location: "home office" with "monitor", "clean desk"

- camera.device: "webcam" for video-call aesthetic
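the dotted names in these lists (hair.style, environment.location) read like paths into a nested json object. here is a minimal sketch of applying them as overrides to a base prompt. the base values are made-up placeholders and `apply_overrides` is a hypothetical helper, so treat the schema as an assumption.

```python
import copy


def apply_overrides(base, overrides):
    """Return a copy of base with dotted-path keys such as 'hair.style' replaced."""
    result = copy.deepcopy(base)  # never mutate the shared base prompt
    for path, value in overrides.items():
        *parents, leaf = path.split(".")
        node = result
        for key in parents:
            node = node.setdefault(key, {})  # create missing levels on the way down
        node[leaf] = value
    return result


# placeholder base prompt, values invented for the example
base_prompt = {
    "gender": "female",
    "age": "25-30",
    "hair": {"color": "brown", "style": "loose waves"},
    "environment": {"location": "bedroom"},
}

gym_guy = apply_overrides(base_prompt, {
    "gender": "male",
    "age": "28-35",
    "hair.style": "short, slightly sweaty",
    "face.skin": "flushed from exercise, slight sweat",
    "environment.location": "home gym",
})
print(gym_guy)
```

only the listed fields change. everything else, like hair color here, carries over from the base, which keeps the structure identical across campaigns.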

the opportunity window

the demographic with actual purchasing power has zero detection ability for properly produced ai content.

the window is specific: 35-65+ year olds control 85% of household spending.

younger demographics are developing detection instincts fast. the buyers aren't.

every week more people figure out this stack. the arbitrage narrows.

you now have every prompt structure. every workflow. every cost breakdown. every failure mode.

the question is whether you'll implement it.

happy to work with @higgsfield on this